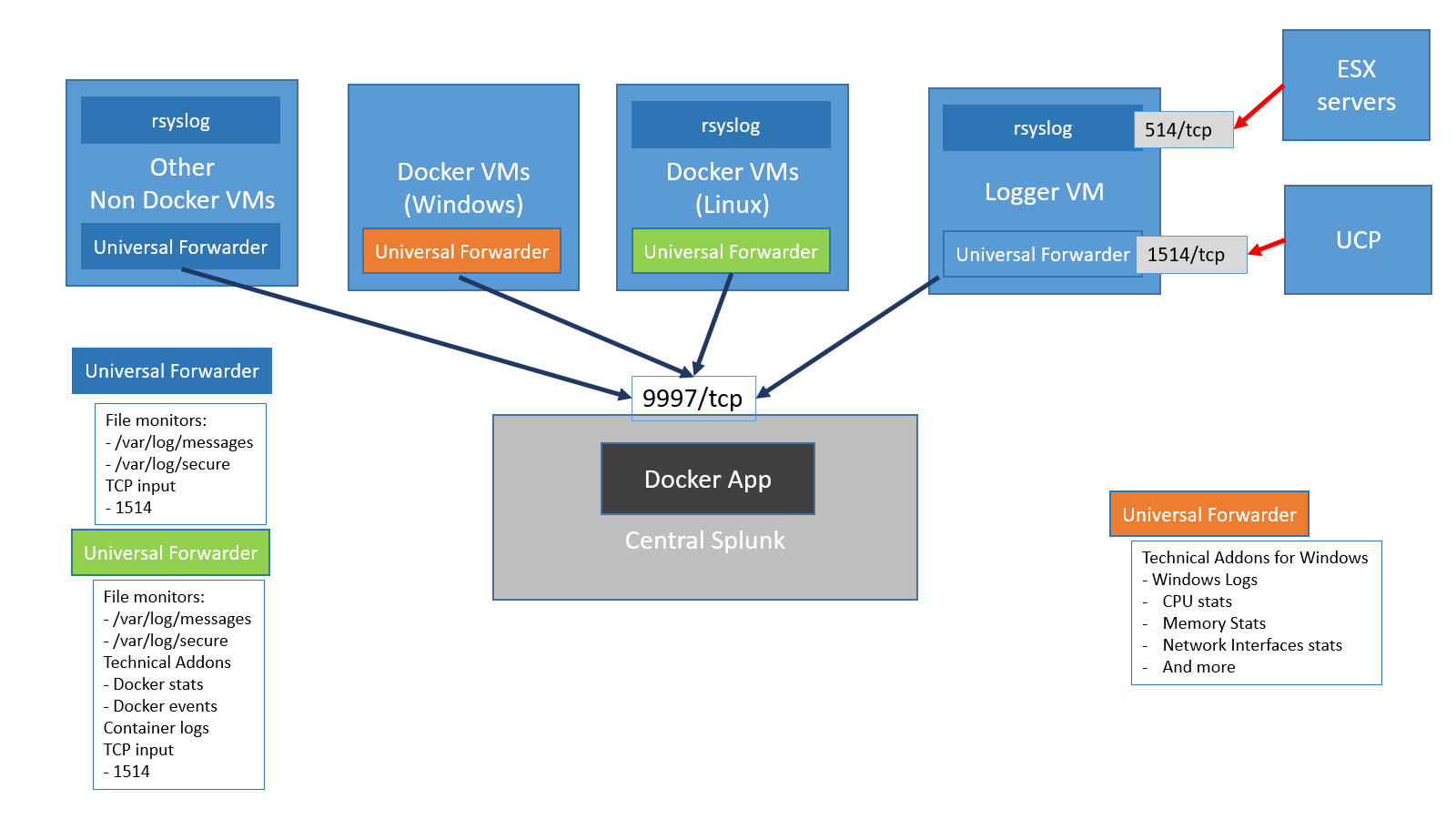

The Splunk DFS Manager app enables you to run Data Fabric Search (DFS). The app automatically bundles, installs, and configures the Apache Spark deployment that DFS requires. It also helps you manage, configure, and monitor your Spark cluster and customize resource allocation within your DFS deployment from any search head, through a scalable and adaptive user interface. The app offers the easiest way of deploying Spark automatically to run DFS searches, irrespective of your search topology. Splunk only provides support for a Spark cluster that is deployed using the Splunk DFS Manager app. If you install your Spark cluster manually, Splunk isn't responsible for support or maintenance of the compute cluster. For information on installing and configuring the app for Splunk DFS Manager, see Splunk Docs.

The app also lets you add DFS workers on all or selected search peers, restrict adding DFS workers to a particular site in a multi-site environment, customize resource usage by leveraging workload management (WLM) to allocate CPU and memory, and monitor the health and resource usage of the Spark cluster. It provides high availability: an alternate search head captain can restart the Spark master if the search head captain fails.

Splunk DFS Manager installation and configuration:

3) Add the nodes as distributed search peers to the search head.
4) Install the app on the search head and search peers. For indexers in an indexer cluster, install the app using a cluster master. For search heads in a search head cluster, install the app using a search head cluster deployer.
5) The DFS master automatically starts on the search head (in standalone mode) or on the search head captain in a search head cluster.
6) Access the Splunk DFS Manager UI on the search head that runs as the DFS master.
7) Add the provisioned search peers as the DFS workers.

Supported topologies and installation paths:

You can use the app irrespective of your deployment scenario and install Spark on a standalone search head, standalone indexer, search head cluster, or indexer cluster. The Splunk DFS Manager app must be installed on the search head and all the search peers that are part of the DFS deployment. In a search head cluster, the app must be installed on all the search heads. In an indexer cluster, the app must be deployed to all the members of the indexer cluster using the cluster master node. Ensure that the app binaries are available on the required DFS search heads and on the search peers that you want to use as DFS workers.

Are you sure that the TA for Tomcat utilizes log4j? Whatever for? It's supposed to parse logs, not generate them.

Anyway, just because some solution uses the vulnerable component doesn't mean that it's used in a vulnerable way. If you use log4j but only ever log static messages, or you generate dynamic logs but never use any user-supplied data for them and you fully control the contents of your logs at all times, you have nothing to worry about.

The vulnerability is so serious on a global scale because of two factors:

1) Ubiquity of log4j: it's the most common logging framework for Java, used across many, many solutions written in Java.
2) Many of those solutions are public-facing, and they log user-supplied data by design (like access logs).

So even though Splunk's DB Connect is written in Java and probably (I haven't checked) uses log4j for logging, the possible attack vector would require data manipulation on the monitored database server side. If you were monitoring a completely unknown database server somewhere on the internet, the risk would be significant. If you're just monitoring your internal infrastructure, the risk is much lower, next to negligible depending on your organization.

With vulnerability management, it's always about assessing risks.
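The reasoning about static versus user-supplied log content can be sketched roughly as follows. This is an illustration in Python, not log4j code, and it assumes the vulnerability under discussion is the log4j message-lookup issue, where a string such as `${jndi:...}` in logged data triggers a lookup. The names `contains_lookup` and `assess_exposure` are hypothetical helpers, and the risk labels simply mirror the wording of the answer.

```python
import re

# Assumption: the risky input is a lookup expression such as "${jndi:ldap://...}".
# This naive pattern is illustrative only; real payloads can be obfuscated,
# so it must not be treated as a detection rule.
LOOKUP_PATTERN = re.compile(r"\$\{\s*jndi\s*:", re.IGNORECASE)


def contains_lookup(text: str) -> bool:
    """Return True if the text contains a plain '${jndi:' style lookup."""
    return LOOKUP_PATTERN.search(text) is not None


def assess_exposure(logs_external_data: bool, source_trusted: bool) -> str:
    """Rough risk label following the argument in the answer.

    logs_external_data: does the component log data it does not fully control?
    source_trusted: does that data come only from infrastructure you control?
    """
    if not logs_external_data:
        # Static or fully controlled log contents: nothing to worry about.
        return "negligible"
    if source_trusted:
        # For example, monitoring your own internal database servers.
        return "low"
    # For example, a public-facing service or an unknown server on the internet.
    return "significant"


if __name__ == "__main__":
    print(contains_lookup("${jndi:ldap://example.invalid/a}"))
    print(assess_exposure(logs_external_data=True, source_trusted=False))
```

The point of the sketch is that the same vulnerable library yields very different exposure depending on who controls the data that reaches the logger, which is the assessment the answer recommends making for each deployment.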
